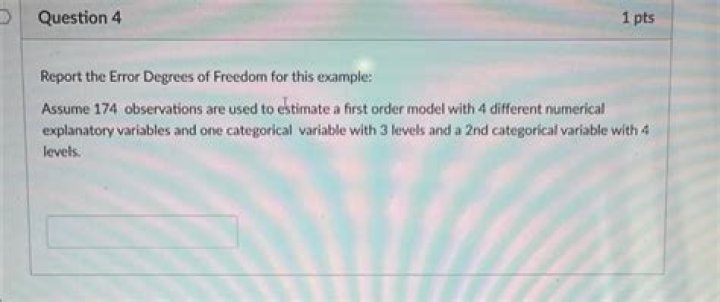

How do you find the error degrees of freedom?

Joseph Russell

The degrees of freedom for error are DFE = N – k, where N is the total number of observations and k is the number of treatments, and the mean square error is MSE = SSE / DFE. The test statistic used to test the equality of treatment means is F = MST / MSE. The critical value is the tabulated value of the F distribution for the chosen α level and the degrees of freedom DFT and DFE.
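The calculation can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `one_way_anova` and the data are hypothetical, and DFT = k – 1 is taken from the one-way ANOVA answer further down this page.

```python
def one_way_anova(groups):
    """Return (F, DFT, DFE) for a list of samples, one list per treatment."""
    k = len(groups)                      # number of treatments
    N = sum(len(g) for g in groups)      # total number of observations
    grand_mean = sum(sum(g) for g in groups) / N

    # SST: variation of the treatment means around the grand mean
    sst = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    # SSE: variation of each observation around its own treatment mean
    sse = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)

    dft = k - 1                          # treatment degrees of freedom
    dfe = N - k                          # error degrees of freedom
    mst = sst / dft
    mse = sse / dfe
    return mst / mse, dft, dfe


# Hypothetical data: three treatments with three observations each
groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
f_stat, dft, dfe = one_way_anova(groups)
print(f_stat, dft, dfe)
```

With these made-up numbers SST = 26 and SSE = 6, so MST = 13, MSE = 1, and F is 13 (up to floating-point rounding) on 2 and 6 degrees of freedom.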

What is the degrees of freedom for error?

The error degrees of freedom are the independent pieces of information that are available for estimating your coefficients. For precise coefficient estimates and powerful hypothesis tests in regression, you must have many error degrees of freedom, which equates to having many observations for each model term.

What is the degree of freedom for the error calculated based on the replication?

Each reproduction of an experiment is called a replicate. The number of replicates for a given experimental design equals R. This can also be defined as the number of degrees-of-freedom for the replicate error. The remaining degrees-of-freedom for lack-of-fit error now become D = N − P − R.
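As a worked illustration with hypothetical numbers, suppose N = 12, P = 4, and R = 3. This excerpt does not define N and P; reading them as the number of experimental runs and the number of fitted model parameters is an assumption.

```latex
D = N - P - R = 12 - 4 - 3 = 5
```

Under that reading, 5 degrees of freedom would remain for the lack-of-fit error.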

What are the degrees of freedom for the denominator df error?

The denominator degrees of freedom belong to the bottom portion of the F ratio and are often called the error degrees of freedom. You can calculate them by subtracting the number of sample groups from the total number of samples tested, where the total is found by adding the number of samples tested in each group.

What is degree of freedom with example?

Degrees of freedom of an estimate is the number of independent pieces of information that went into calculating the estimate. It’s not quite the same as the number of items in the sample. You could use 4 people, giving 3 degrees of freedom (4 – 1 = 3), or you could use one hundred people with df = 99.

What are the degrees of freedom for the F test in a one way Anova?

The F test statistic is found by dividing the between group variance by the within group variance. The degrees of freedom for the numerator are the degrees of freedom for the between group (k-1) and the degrees of freedom for the denominator are the degrees of freedom for the within group (N-k).

How do you find degrees of freedom for Anova?

The degrees of freedom are equal to the sum of the individual degrees of freedom for each sample. Since each sample has degrees of freedom equal to one less than its sample size, and there are k samples, the within-group (error) degrees of freedom are k less than the total sample size: df = N – k.
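A quick check of that identity, using hypothetical group sizes:

```python
# Hypothetical sizes for k = 3 samples
sizes = [5, 7, 4]
k = len(sizes)
N = sum(sizes)

# Each sample contributes (its size - 1) degrees of freedom
df_per_sample = [n - 1 for n in sizes]
df_within = sum(df_per_sample)

# Summing the per-sample values gives the same result as N - k
print(df_within, N - k)
```

Both values come out to 13, because subtracting 1 from each of the k sizes removes k in total.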

Why is degree of freedom important?

Degrees of freedom are important for finding critical cutoff values for inferential statistical tests. Because higher degrees of freedom generally mean larger sample sizes, a higher degree of freedom means more power to reject a false null hypothesis and find a significant result.

What is the degrees of freedom for F test?

Degrees of freedom is your sample size minus 1. As you have two samples (variance 1 and variance 2), you’ll have two degrees of freedom: one for the numerator and one for the denominator.
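A small sketch of the two-sample case, with made-up data and using `statistics.variance` from the Python standard library (which divides by n – 1):

```python
from statistics import variance

# Hypothetical samples; sample_1 is placed in the numerator
sample_1 = [4.0, 6.0, 8.0, 10.0]
sample_2 = [5.0, 6.0, 7.0]

var_1 = variance(sample_1)      # sample variance of the first sample
var_2 = variance(sample_2)      # sample variance of the second sample
f_ratio = var_1 / var_2

df_num = len(sample_1) - 1      # numerator degrees of freedom
df_den = len(sample_2) - 1      # denominator degrees of freedom
print(f_ratio, df_num, df_den)
```

Here the numerator has 3 degrees of freedom and the denominator has 2, one less than each sample size.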

What is degree of freedom explain it?

Degrees of freedom refers to the maximum number of logically independent values, which are values that have the freedom to vary, in the data sample. Degrees of freedom are commonly discussed in relation to various forms of hypothesis testing in statistics, such as the chi-square test.

What are the 12 degrees of freedom?

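A degree of freedom is defined as the capability of a body to move in a given direction. Consider a rectangular box in space: it can move in twelve different directions (six rotational and six axial). Each direction of movement is counted as one degree of freedom, so under this counting a body in space has twelve degrees of freedom. This count treats the two opposite directions along or about each of the three axes separately; counting each axis once gives the six degrees of freedom usual in mechanics.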

Can F value be less than 1?

When the null hypothesis is false, it is still possible to get an F ratio less than one. The larger the population effect size is (in combination with sample size), the more the F distribution will move to the right, and the less likely we will be to get a value less than one.

Why is ANOVA one tailed F test?

An F-test (Snedecor and Cochran, 1983) is used to test if the variances of two populations are equal. The one-tailed version only tests in one direction, that is the variance from the first population is either greater than or less than (but not both) the second population variance.

What does F stand for in ANOVA?

F = variation between sample means / variation within the samples. The best way to understand this ratio is to walk through a one-way ANOVA example. We’ll analyze four samples of plastic to determine whether they have different mean strengths.

Is F-test and Anova the same?

ANOVA uses the F-test to determine whether the variability between group means is larger than the variability of the observations within the groups. If that ratio is sufficiently large, you can conclude that not all the means are equal. And that’s why you use analysis of variance to test the means.

What are the 3 degrees of freedom?

Three degrees of freedom (3DOF), a term often used in the context of virtual reality, refers to tracking of rotational motion only: pitch, yaw, and roll.

What would an F value of 1.0 indicate?

If the null hypothesis is true, you expect F to have a value close to 1.0 most of the time. A large F ratio means that the variation among group means is more than you’d expect to see by chance.

What does an F value tell us?

The F statistic just compares the joint effect of all the variables together. To put it simply, reject the null hypothesis only if your alpha level is larger than your p value. Caution: If you are running an F Test in Excel, make sure your variance 1 is smaller than variance 2.

Is an F-test one tailed?

An F-test (Snedecor and Cochran, 1983) is used to test if the variances of two populations are equal. This test can be a two-tailed test or a one-tailed test. The two-tailed version tests against the alternative that the variances are not equal.

The degrees of freedom add up, so we can get the error degrees of freedom by subtracting the degrees of freedom associated with the factor from the total degrees of freedom. That is, the error degrees of freedom is 14 − 2 = 12. Alternatively, we can calculate the error degrees of freedom directly from n − m = 15 − 3 = 12.
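The same bookkeeping in code, assuming n is the total number of observations and m is the number of factor levels (the passage gives the values 15 and 3 without naming them further):

```python
n = 15                      # total number of observations
m = 3                       # number of factor levels (groups)

df_total = n - 1            # 14
df_factor = m - 1           # 2
df_error = df_total - df_factor

# The subtraction route and the direct route agree
print(df_error, n - m)
```

Both routes give 12, since (n − 1) − (m − 1) simplifies to n − m.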

The degrees of freedom for the error term for age is equal to the total number of subjects minus the number of groups: 8 – 2 = 6. The degrees of freedom for the Age x Trials interaction is equal to the product of the degrees of freedom for age (1) and the degrees of freedom for trials (4) = 1 x 4 = 4.
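These counts can be reproduced as follows. The variable names are illustrative, and the figure of 5 trials is an assumption inferred from the stated 4 degrees of freedom for trials:

```python
n_subjects = 8
n_age_groups = 2
n_trials = 5                                # assumed: df for trials is 4

df_age = n_age_groups - 1                   # 1
df_error_age = n_subjects - n_age_groups    # subjects minus groups
df_trials = n_trials - 1                    # 4
df_interaction = df_age * df_trials         # product of the two main-effect dfs

print(df_error_age, df_interaction)
```

This gives 6 error degrees of freedom for age and 4 for the Age x Trials interaction.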

What is the corresponding identity for degrees of freedom?

In general, the degrees of freedom of an estimate of a parameter are equal to the number of independent scores that go into the estimate minus the number of parameters used as intermediate steps in the estimation of the parameter itself (most of the time the sample variance has N − 1 degrees of freedom, since it is …

What happens to a t-distribution as the degrees of freedom increase?

As the degrees of freedom increase, the area in the tails of the t-distribution decreases while the area near the center increases. At any finite number of degrees of freedom the tails are still heavier than those of the standard normal distribution, so extreme observations (positive and negative) are more likely under the t-distribution than under the standard normal distribution.

How many degrees of freedom would you use for biological studies?

However, this should be increased if there is low precision in the measurements taken, and for biological trials, a minimum residual degrees of freedom of 15 is considered appropriate for a useful statistical analysis.

How many degrees of freedom are there in a number?

In fact, the first 9 values could be anything. But for all 10 values to sum to 35, and have a mean of 3.5, the 10th value cannot vary. It must be a specific number: 35 minus the sum of the first 9 values. Therefore, you have 10 – 1 = 9 degrees of freedom.
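A short sketch of that constraint, with nine arbitrarily chosen (hypothetical) values:

```python
target_sum = 35                                # 10 values with a mean of 3.5

# The first nine values are free to vary; these are made up
first_nine = [3, 5, 2, 4, 1, 6, 3, 4, 2]

# The tenth value is forced by the required total
tenth = target_sum - sum(first_nine)
values = first_nine + [tenth]

print(tenth, sum(values) / len(values))
```

Whatever nine numbers are chosen, only one tenth value keeps the sum at 35, which is why one degree of freedom is lost.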

How are degrees of freedom for replicates defined?

This can also be defined as the number of degrees-of-freedom for the replicate error. The remaining degrees-of-freedom for lack-of-fit error now become D = N − P − R. Any statistical design can be analysed for different sources of error or variability. Consider the design of Table 2.

How are degrees of freedom affected by sample size?

As the sample size (n) increases, the number of degrees of freedom increases, and the t-distribution approaches a normal distribution. Let’s look at another context. A chi-square test of independence is used to determine whether two categorical variables are dependent.

How are the degrees of freedom of a parameter determined?

In general, the degrees of freedom of an estimate of a parameter are equal to the number of independent scores that go into the estimate minus the number of parameters used as intermediate steps in the estimation of the parameter itself (most of the time the sample variance has N − 1 degrees of freedom, since it is computed from N random scores …