What are batch size and learning rate?

Emma Jordan

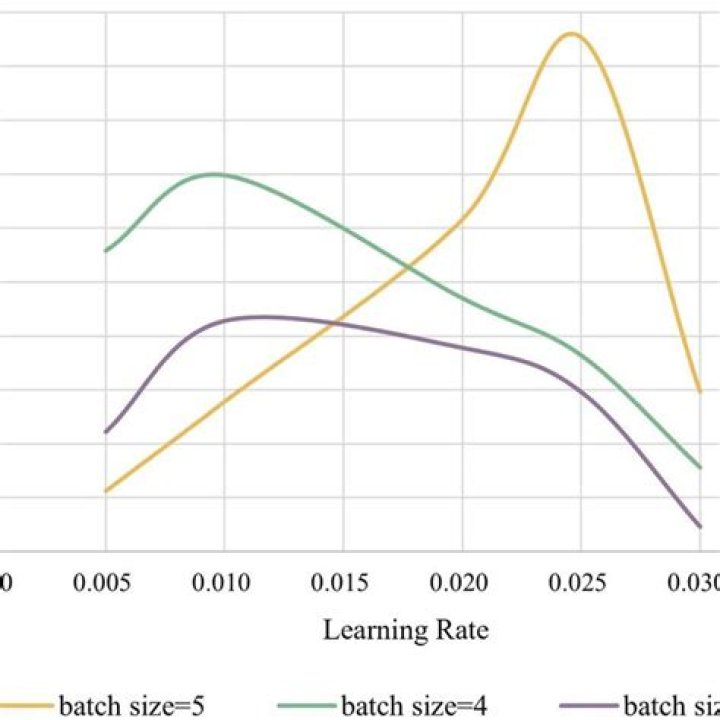

When studying gradient descent, we learn that both learning rate and batch size matter. Increasing the learning rate speeds up training, but risks overshooting the minimum of the loss. Reducing the batch size means the model uses fewer samples to calculate the loss in each training iteration.
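The overshooting risk can be seen on a toy problem. The sketch below assumes a hypothetical one-dimensional loss f(x) = x^2 (not taken from the article) and runs plain gradient descent with two learning rates; the function name and the values 0.1 and 1.1 are chosen only for illustration.

```python
def gradient_descent(lr, steps=20, x0=1.0):
    """Minimise the toy loss f(x) = x**2 with plain gradient descent."""
    x = x0
    for _ in range(steps):
        grad = 2.0 * x      # derivative of x**2
        x = x - lr * grad   # gradient descent update
    return x


# A small learning rate shrinks x towards the minimum at 0.
small_lr_result = gradient_descent(lr=0.1)

# A learning rate that is too large overshoots the minimum on every step,
# so x flips sign and grows in magnitude instead of converging.
large_lr_result = gradient_descent(lr=1.1)

print(abs(small_lr_result), abs(large_lr_result))
```

On this loss each update multiplies x by (1 - 2 * lr), so any learning rate above 1.0 makes the iterates diverge. Real loss surfaces are not this simple, but the same trade-off applies.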

What does batch size mean?

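Batch size is a term used in machine learning and refers to the number of training examples used in one iteration. The batch size can be one of three options: batch mode, where the batch size is equal to the total dataset size; mini-batch mode, where the batch size is greater than one but less than the total dataset size (usually a number that divides the total dataset size evenly); and stochastic mode, where the batch size is equal to one.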
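As a rough illustration, the three modes can be told apart from just the batch size and the dataset size. The helper name `batch_mode` and the example numbers are hypothetical.

```python
def batch_mode(batch_size, dataset_size):
    """Name the training mode implied by a batch size (illustrative helper)."""
    if not 1 <= batch_size <= dataset_size:
        raise ValueError("batch_size must be between 1 and dataset_size")
    if batch_size == 1:
        return "stochastic"   # one example per update
    if batch_size == dataset_size:
        return "batch"        # the whole dataset per update
    return "mini-batch"       # anything in between


print(batch_mode(1, 1000))     # stochastic
print(batch_mode(32, 1000))    # mini-batch
print(batch_mode(1000, 1000))  # batch
```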

Is higher batch size better?

Not necessarily. Higher batch sizes tend to lead to lower asymptotic test accuracy. Training can switch to a lower batch size or a higher learning rate at any time to achieve better test accuracy. Larger batch sizes also take larger gradient steps than smaller batch sizes for the same number of samples seen.

How does batch size work?

The batch size is the number of samples processed before the model is updated. The number of epochs is the number of complete passes through the training dataset. The batch size must be at least one and at most the number of samples in the training dataset.
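The relationship between batch size, epochs, and model updates can be sketched with a little arithmetic. The function name and the dataset size of 1000 are assumptions for illustration, and the sketch assumes the final, smaller batch of each epoch is still used for an update.

```python
def updates_per_epoch(num_samples, batch_size):
    """Number of model updates in one full pass over the data."""
    if not 1 <= batch_size <= num_samples:
        raise ValueError("batch_size must be between 1 and num_samples")
    # Ceiling division: a final partial batch still triggers one update.
    return (num_samples + batch_size - 1) // batch_size


num_samples = 1000  # hypothetical dataset size
epochs = 10         # hypothetical number of passes

per_epoch = updates_per_epoch(num_samples, batch_size=32)
total_updates = epochs * per_epoch
print(per_epoch, total_updates)
```

Some frameworks can instead drop the incomplete last batch, in which case floor division would apply.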

How do I choose a batch size?

The batch size depends on the size of the images in your dataset: choose a batch size no larger than your GPU memory can hold. Beyond that, the batch size should be neither very large nor very small, and it should be chosen so that almost the same number of images is processed in every step of an epoch.
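One way to check the "same number of images in every step" advice is to look at how many samples are left for the last step of an epoch. This is a minimal sketch; the dataset size of 1000 and the candidate batch sizes are made up for the example.

```python
def last_batch_size(num_samples, batch_size):
    """Size of the final batch in an epoch when samples are taken in order."""
    if not 1 <= batch_size <= num_samples:
        raise ValueError("batch_size must be between 1 and num_samples")
    remainder = num_samples % batch_size
    # A remainder of zero means the last batch is a full one.
    return remainder if remainder else batch_size


num_samples = 1000  # hypothetical dataset size
leftovers = {b: last_batch_size(num_samples, b) for b in (32, 64, 100, 128, 256)}

for batch_size, last in leftovers.items():
    print(f"batch size {batch_size}: last step sees {last} samples")
```

Here a batch size of 100 divides the dataset evenly, while 32 leaves a final step with only 8 samples.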

What is a good batch size?

In general, a batch size of 32 is a good starting point, and you should also try 64, 128, and 256. Other values (lower or higher) may work well for some datasets, but this range is generally the best place to start experimenting.

What is minimum batch size?

In a manufacturing contract, as opposed to machine learning, Minimum Batch Size means the minimum total number of wafers in a process batch for a particular product.

How do I determine batch size?

In the manufacturing sense, the per-item setup cost is computed by amortizing the batch setup cost over the batch size. A batch size of one means the single item carries the entire setup cost. A batch size of ten means each item carries 1/10 of the setup cost (ten times less). This is why per-item cost decays as batch size gets larger.
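The amortization arithmetic can be written out directly. The setup cost of 100.0 and the function name are hypothetical, chosen only to make the decay visible.

```python
def setup_cost_per_item(setup_cost, batch_size):
    """Spread a fixed batch setup cost evenly over the items in the batch."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return setup_cost / batch_size


setup_cost = 100.0  # hypothetical fixed cost per batch
costs = {b: setup_cost_per_item(setup_cost, b) for b in (1, 10, 100)}

for batch_size, cost in costs.items():
    print(f"batch size {batch_size}: setup cost per item {cost}")
```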

What is a good mini-batch size?

A common recommendation is a mini-batch of 64, 128, 256, 512, or 1024 elements. The most important part of this advice is making sure that the mini-batch fits in CPU/GPU memory. When the data fits, training can take advantage of fast memory access, which can significantly reduce the time required to train a model.

How important is batch size?

The number of examples from the training dataset used in the estimate of the error gradient is called the batch size and is an important hyperparameter that influences the dynamics of the learning algorithm. Batch size controls the accuracy of the estimate of the error gradient when training neural networks.
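The link between batch size and the accuracy of the gradient estimate can be illustrated with a small simulation. This sketch assumes hypothetical per-example gradients drawn as a true value of 1.0 plus unit Gaussian noise; it does not train a real network.

```python
import random
import statistics


def gradient_estimate_spread(batch_size, trials=200, seed=0):
    """Standard deviation of mini-batch gradient estimates across many batches."""
    rng = random.Random(seed)
    estimates = [
        # Each estimate is the mean of batch_size noisy per-example gradients
        # (hypothetical: true gradient 1.0 plus Gaussian noise with std 1.0).
        statistics.fmean(rng.gauss(1.0, 1.0) for _ in range(batch_size))
        for _ in range(trials)
    ]
    return statistics.stdev(estimates)


spread_small_batch = gradient_estimate_spread(batch_size=4)
spread_large_batch = gradient_estimate_spread(batch_size=256)
print(spread_small_batch, spread_large_batch)
```

Under these assumptions the spread of the estimate shrinks roughly with the square root of the batch size, so larger batches give a less noisy gradient estimate per update.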

Why is batch size a power of 2?

This is a matter of aligning the virtual processors (VP) onto the physical processors (PP) of the GPU. Since the number of PP is often a power of 2, using a number of VP that is not a power of 2 can lead to poor performance.

What is the minimum batch size?

Another contractual definition: Minimum Batch Size means the minimum quantity of corrective ophthalmic lenses that can be cost-effectively produced by the respondent in a single operation, which shall not exceed 150 lenses.

Can batch size be a power of 2?

Preferably, yes. CPU and GPU memory architectures usually organize memory in powers of 2 (on Linux, you can check the page size of your CPU with getconf PAGESIZE). For efficiency, it is a good idea to use mini-batch sizes that are powers of 2, since they are more likely to align to page boundaries. This can speed up fetching data into memory.

Does batch size increase time?

Larger batch sizes usually reduce training time. Using bigger batches (up to what the GPU allows) speeds up training, because it amounts to taking a few big steps instead of many little ones. With bigger batch sizes, for the same number of epochs, we can sometimes see a 2x speedup in computation time.

What is the power of two in math?

A power of two is a number of the form 2^n where n is an integer, that is, the result of exponentiation with the number two as the base and the integer n as the exponent. Written in binary, a power of two always has the form 100…000 or 0.00…001, just like a power of 10 in the decimal system.
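For positive integers such as batch sizes, the binary form described above (a single 1 followed by zeros) gives a quick test. This is a minimal sketch; the function name is illustrative.

```python
def is_power_of_two(n):
    """Return True if n is a positive integer power of two (1, 2, 4, 8, ...)."""
    # A power of two has exactly one set bit, so clearing the lowest set bit
    # with n & (n - 1) leaves zero.
    return n > 0 and (n & (n - 1)) == 0


for candidate in (32, 48, 64, 100, 128):
    print(candidate, bin(candidate), is_power_of_two(candidate))
```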
