Why is my accuracy not changing?

Robert Harper

The most likely reason is that the optimizer is not suited to your dataset. Keras provides several optimizers, which are listed in its documentation. I recommend first trying SGD with the default parameter values. If the accuracy still does not change, divide the learning rate by 10.
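To illustrate why dividing the learning rate by 10 can help, here is a minimal sketch using plain full-batch gradient descent on a toy linear regression. This is a simplification of SGD, not Keras code. The data, the function name `gradient_descent_loss`, and the two learning rates are assumptions chosen for illustration.

```python
import numpy as np

def gradient_descent_loss(X, y, learning_rate, steps=50):
    """Run full-batch gradient descent on mean squared error; return the final loss."""
    w = np.zeros(X.shape[1])
    n = len(X)
    for _ in range(steps):
        grad = (2.0 / n) * X.T @ (X @ w - y)  # gradient of the MSE with respect to w
        w -= learning_rate * grad
    return float(np.mean((X @ w - y) ** 2))

# Hypothetical toy data: 200 samples, 3 features, noiseless linear target.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X @ np.array([1.5, -2.0, 0.5])

loss_high = gradient_descent_loss(X, y, learning_rate=2.0)  # step too large: loss blows up
loss_low = gradient_descent_loss(X, y, learning_rate=0.2)   # divided by 10: loss shrinks
print(f"lr=2.0 -> loss {loss_high:.3g}")
print(f"lr=0.2 -> loss {loss_low:.3g}")
```

With the larger step the loss grows instead of shrinking, so the metric never improves. With the smaller step the same model converges.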

Why is my validation accuracy decreasing?

This is usually a sign of overfitting. Overfitting happens when a model begins to focus on the noise in the training data set and extracts features based on it. This helps the model improve its performance on the training set but hurts its ability to generalize, so the accuracy on the validation set decreases.

How do you fix fluctuating validation accuracy?

Possible solutions:

- obtain more data-points (or artificially expand the set of existing ones)

- play with hyper-parameters (increase/decrease capacity or regularization term for instance)

- regularization: try dropout, early stopping, and so on.

Is it possible to have 100% accuracy?

It is possible to get 100% accuracy on the training set. To do it, use an interpolation polynomial of degree n - 1 if you have n samples with distinct inputs.
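As a rough sketch of this idea, the snippet below fits a degree n - 1 polynomial through n one-dimensional samples with NumPy and checks the training accuracy. The inputs and the +1/-1 labels are made up for illustration.

```python
import numpy as np

# Hypothetical data: n = 6 distinct inputs with arbitrary +1/-1 labels.
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0, 3.0])
y = np.array([1, -1, 1, 1, -1, 1])

# A polynomial of degree n - 1 passes through all n points.
coeffs = np.polyfit(x, y, deg=len(x) - 1)

# Classify by the sign of the polynomial's value at each training input.
pred = np.sign(np.polyval(coeffs, x))
train_accuracy = float(np.mean(pred == y))
print(train_accuracy)
```

This fits the training set perfectly, but it says nothing about accuracy on new data.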

Does increasing epochs increase accuracy?

Not necessarily: accuracy can decrease as the number of epochs increases (#1971).

How do you improve test accuracy?

The following are proven ways to improve the accuracy of a model:

- Add more data. Having more data is always a good idea.

- Treat missing and outlier values.

- Feature Engineering.

- Feature Selection.

- Multiple algorithms.

- Algorithm Tuning.

- Ensemble methods.

How do I fix overfitting?

Handling overfitting

- Reduce the network’s capacity by removing layers or reducing the number of elements in the hidden layers.

- Apply regularization, which comes down to adding a cost to the loss function for large weights.

- Use Dropout layers, which will randomly remove certain features by setting them to zero.
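The last two items can be sketched in plain NumPy. This is not the Keras implementation. The function names `l2_penalized_loss` and `dropout`, the penalty strength, and the use of "inverted" dropout scaling are assumptions made for illustration.

```python
import numpy as np

def l2_penalized_loss(y_true, y_pred, weights, l2=0.01):
    """Mean squared error plus a cost proportional to the squared size of the weights."""
    mse = np.mean((y_true - y_pred) ** 2)
    penalty = l2 * sum(np.sum(w ** 2) for w in weights)
    return float(mse + penalty)

def dropout(activations, rate, rng):
    """Zero each activation with probability `rate`; rescale the rest (inverted dropout)."""
    keep_prob = 1.0 - rate
    mask = rng.random(activations.shape) < keep_prob
    return activations * mask / keep_prob

rng = np.random.default_rng(0)
y_true = np.array([1.0, 0.0, 1.0])
y_pred = np.array([0.9, 0.2, 0.8])

small_weights = [np.full((3, 3), 0.1)]
large_weights = [np.full((3, 3), 5.0)]
loss_small = l2_penalized_loss(y_true, y_pred, small_weights)
loss_large = l2_penalized_loss(y_true, y_pred, large_weights)
print(loss_small, loss_large)  # same predictions, but large weights cost more

dropped = dropout(np.ones((4, 5)), rate=0.5, rng=rng)
print(dropped)  # entries are either 0.0 (dropped) or 2.0 (kept and rescaled)
```

Dropout is applied only during training. At prediction time the activations are used unchanged.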

Why is my training accuracy fluctuating?

Your learning rate may be too high, so try decreasing it. The validation set may also be too small, so that small changes in the output cause large fluctuations in the validation error.
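The effect of validation set size can be checked with some quick arithmetic. The sketch below uses the binomial standard error of an accuracy estimate. The helper name `accuracy_std_error` and the example accuracy of 0.8 are assumptions for illustration.

```python
import math

def accuracy_std_error(accuracy, n_samples):
    """Binomial standard error of an accuracy measured on n_samples examples."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_samples)

for n in (50, 500, 5000):
    one_sample_step = 1.0 / n  # accuracy change when a single prediction flips
    print(n, one_sample_step, round(accuracy_std_error(0.8, n), 4))
```

On 50 validation samples a single flipped prediction moves the accuracy by 2 percentage points, so visible fluctuation between epochs is expected even when the model barely changes.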

Why do I get 100% accuracy?

You are getting 100% accuracy because you are using part of the training data for testing. During training, the decision tree learned that data, so if you give it the same data to predict, it will return exactly the same values.
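A small scikit-learn sketch makes the difference visible. The synthetic noisy data and the 70/30 split are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Hypothetical noisy data: the label depends on the first feature plus random noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 5))
y = (X[:, 0] + rng.normal(scale=1.0, size=500) > 0).astype(int)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# An unrestricted tree can memorize every training sample.
tree = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)

train_acc = tree.score(X_train, y_train)  # scored on data the tree has already seen
test_acc = tree.score(X_test, y_test)     # scored on held-out data
print(train_acc, test_acc)
```

Only the score on data held out from training tells you how the model generalizes.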

Can a random forest give 100% accuracy?

I just created my first working RandomForest classification ML model. It works amazingly well: no errors, and the accuracy is 100%.

Are too many epochs overfitting?

Too many epochs can lead to overfitting of the training dataset, whereas too few may result in an underfit model. Early stopping is a method that allows you to specify an arbitrarily large number of training epochs and stop training once the model's performance stops improving on a hold-out validation dataset.
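The early stopping rule can be sketched in a few lines of plain Python. The function name `early_stopping_epoch`, the `patience` and `min_delta` parameters, and the loss values are hypothetical. Real frameworks offer their own callbacks for this.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of per-epoch validation losses.

    Training stops once the loss has not improved by more than `min_delta`
    for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

# Hypothetical validation losses: improving until epoch 3, then getting worse.
val_losses = [0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.61, 0.65]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)  # prints: 6 3
```

In practice you would also restore the weights saved at `best_epoch` rather than keep the weights from the epoch where training stopped.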

What is the problem with overfitting?

Overfitting refers to a model that models the training data too well. This means that the noise or random fluctuations in the training data are picked up and learned as concepts by the model. The problem is that these concepts do not apply to new data and negatively impact the model's ability to generalize.

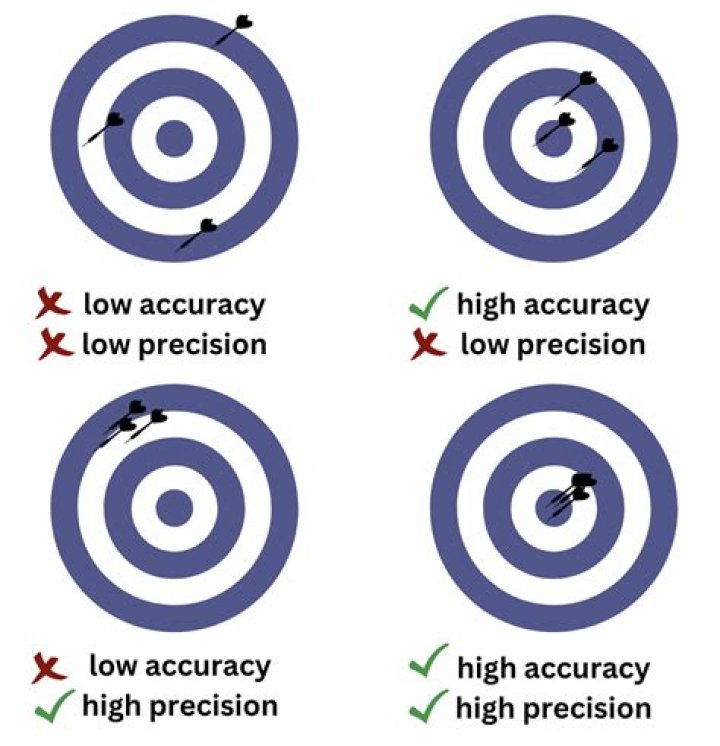

How do I know if my model is overfitting?

Overfitting can be identified by comparing training and validation metrics such as accuracy and loss. Validation accuracy usually increases up to a point and then stagnates or starts declining once the model begins to overfit, while validation loss starts to rise even though the training metrics keep improving.

How do I fix overfitting and underfitting?

In addition, the following ways can also be used to tackle underfitting.

- Increase the size or number of parameters in the ML model.

- Increase the complexity or type of the model.

- Increase the training time until the cost function is minimised.

How do you improve validation accuracy?

- Use weight regularization. It tries to keep weights low which very often leads to better generalization.

- Corrupt your input (e.g., randomly substitute some pixels with black or white).

- Expand your training set.

- Pre-train your layers with denoising criteria.

- Experiment with network architecture.

What is training accuracy and validation accuracy?

Training accuracy is the accuracy calculated on the data the model was trained on. The test (or testing) accuracy often refers to the validation accuracy, that is, the accuracy you calculate on the data set you do not use for training, but you use (during the training process) for validating (or “testing”) the generalisation ability of your model or for “early stopping”.

Is it possible to get 100 percent accuracy in Perceptron?

Yes. If the classes are linearly separable and your network learns the separating line correctly, it can reach 100% accuracy.
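As a minimal sketch, the classic perceptron learning rule below is trained on the logical AND function, which is linearly separable. The function name `train_perceptron` and the +1/-1 label encoding are assumptions for illustration.

```python
import numpy as np

def train_perceptron(X, y, max_epochs=1000):
    """Classic perceptron rule; labels must be +1 or -1."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for xi, yi in zip(X, y):
            if yi * (xi @ w + b) <= 0:  # misclassified or on the boundary
                w += yi * xi
                b += yi
                mistakes += 1
        if mistakes == 0:  # every sample is on the correct side of the line
            break
    return w, b

# Logical AND: only the input (1, 1) belongs to the positive class.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([-1, -1, -1, 1])

w, b = train_perceptron(X, y)
accuracy = float(np.mean(np.sign(X @ w + b) == y))
print(w, b, accuracy)
```

For data that is not linearly separable, such as XOR, a single perceptron cannot reach 100% accuracy no matter how long it trains.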

How do I reduce overfitting in a random forest?

- n_estimators: The more trees, the less likely the algorithm is to overfit.

- max_features: You should try reducing this number.

- max_depth: Limiting this parameter reduces the complexity of the learned models, lowering the risk of overfitting.

- min_samples_leaf: Try setting these values greater than one.
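The four parameters above map directly onto scikit-learn's `RandomForestClassifier`. The sketch below shows one possible setting. The synthetic dataset and the specific values are assumptions for illustration, not recommended defaults.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Hypothetical noisy dataset: 10% of the labels are flipped at random.
X, y = make_classification(
    n_samples=600, n_features=20, n_informative=5, flip_y=0.1, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0
)

forest = RandomForestClassifier(
    n_estimators=300,      # more trees
    max_features="sqrt",   # fewer candidate features per split
    max_depth=6,           # shallower, simpler trees
    min_samples_leaf=5,    # each leaf must cover several samples
    random_state=0,
)
forest.fit(X_train, y_train)

train_acc = forest.score(X_train, y_train)
val_acc = forest.score(X_val, y_val)
print(train_acc, val_acc)
```

Comparing `train_acc` with `val_acc` while adjusting these values shows whether the gap between them is narrowing.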

Why is my model giving 100 percent accuracy?

You are getting 100% accuracy because you are using part of the training data for testing. During training, the decision tree learned that data, so if you give it the same data to predict, it will return exactly the same values. That is why the decision tree produces correct results every time.